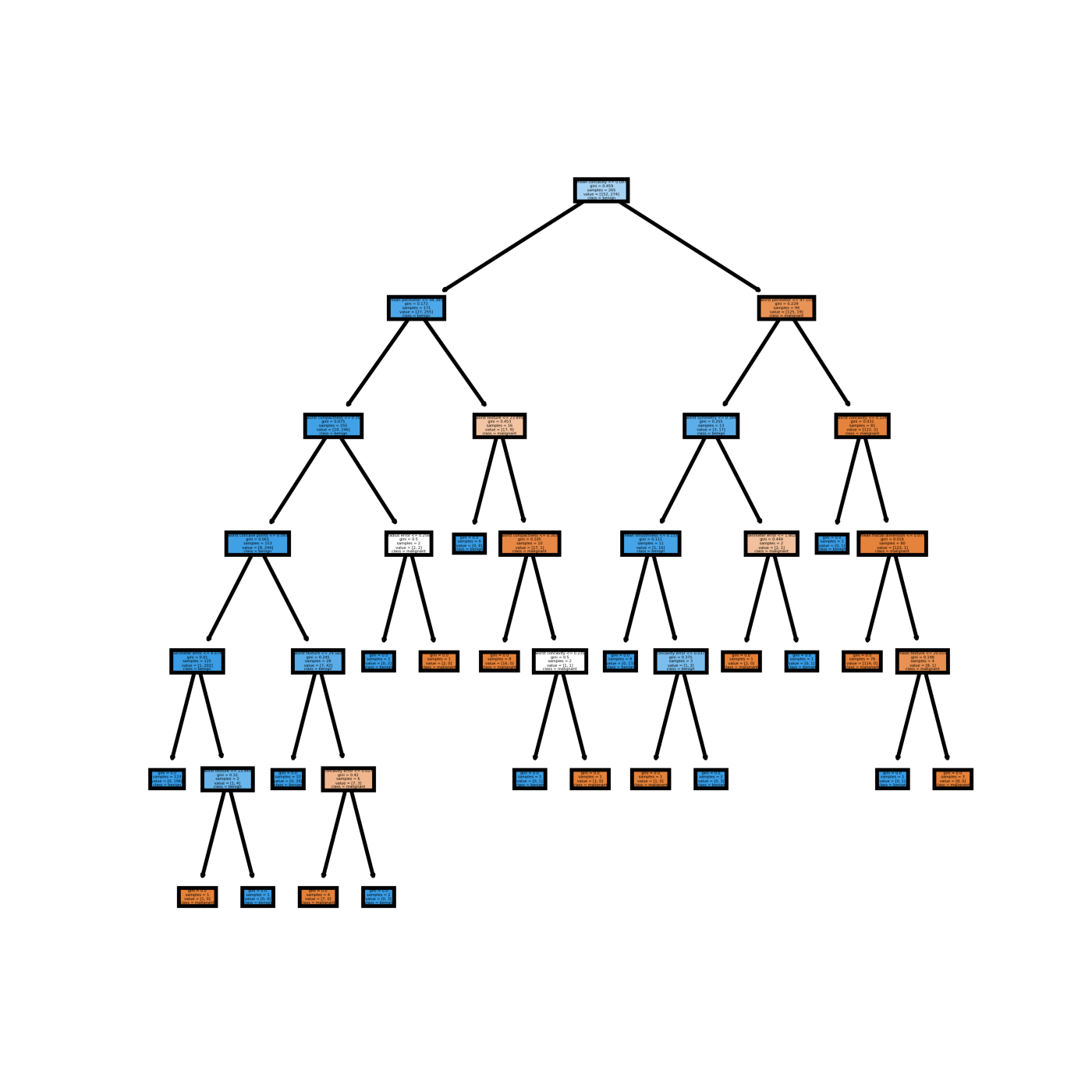

A decision tree is a popular and powerful method for making predictions in data science. Decision trees also form the foundation for other popular ensemble methods such as bagging, boosting and gradient boosting. Their popularity is due to the simplicity of the technique, which makes them easy to understand. We are going to discuss building decision trees for several classification problems. First, let’s start with a simple classification example to explain how a decision tree works.

While this article focuses on describing the details of building and using a decision tree, the actual Python code for fitting a decision tree, predicting with a decision tree and printing a dot file for graphing a decision tree is available at my GitHub.

Let’s say we have 10 rectangles of various widths and heights. Five of the rectangles are purple and five are yellow. The data is shown below with X1 representing the width, X2 representing the height and Y representing the classes of 0 for purple rectangles and 1 for yellow rectangles:

Graphing the rectangles, we can very clearly see the separate classes.

Based on the rectangle data, we can build a simple decision tree to make forecasts. Decision trees are made up of decision nodes and leaf nodes. In the decision tree below we start with the top-most box, which represents the root of the tree (a decision node). The first line of text in the root depicts the optimal initial decision: splitting the tree based on the width (X1) being less than 5.3. The second line represents the initial Gini score, which we will cover in more detail later. The third line represents the number of samples at this initial level - in this case 10. The fourth line represents the number of items in each class for the node - 5 for purple rectangles and 5 for yellow rectangles.

After splitting the data by width (X1) less than 5.3, we get two leaf nodes with 5 items in each node. All the purple rectangles (0) are in one leaf node and all the yellow rectangles (1) are in the other leaf node. Their corresponding Gini score, sample size and values are updated to reflect the split. In this very simple example, we can predict whether a given rectangle is purple or yellow by simply checking if the width of the rectangle is less than 5.3.

The key to building a decision tree is determining the optimal split at each decision node. Using the simple example above, how did we know to split the root at a width (X1) of 5.3? The answer lies with the Gini index, or Gini score.

The Gini index is a cost function used to evaluate splits. It measures the probability that a randomly selected item at a node would be classified incorrectly if it were labeled at random according to the class proportions at that node. It is calculated as the sum of p(1 - p) over all classes, where p is the proportion of a class within a node. Since the values of p sum to 1, the formula can also be written as 1 - sum(p squared). For two classes, the Gini index varies between 0 and 0.5. Using our simple 2 class example, the Gini index for the root node is 1 - ((5/10)^2 + (5/10)^2) = 0.5, reflecting an equal distribution of rectangles in the 2 classes. So a randomly selected item at this node has a 50% chance of being classified incorrectly. If the Gini score were 0, then 100% of our dataset at this node would be classified correctly (0% incorrect). Our goal then is to use the lowest Gini score to build the decision tree.

In order to determine the best split, we need to iterate through all the features and consider the midpoints between adjacent training sample values as candidate splits. We then need to evaluate the cost of each split (Gini) and find the optimal split (lowest Gini). Let’s run through one example of calculating the Gini for one feature. Sorting X1 in ascending order, the first value is 1.72857131. For class 0, the split is 1 to the left and 4 to the right (the one item on the left is 1.72857131 itself). For class 1, the split is 0 to the left and 5 to the right. The left side therefore has a Gini of 0, and the right side, with 4 class 0 items and 5 class 1 items, has a Gini of 1 - ((4/9)^2 + (5/9)^2) = 0.49382716. The Gini of the split is the average of the left and right sides, weighted by the number of items on each side: ((1 * 0) + (9 * 0.49382716)) / 10 = 0.444.

Running this algorithm for each row gives us all the possible Gini scores for each feature. If we look at the Gini scores, the lowest is 0. We could use 6.642 as our threshold, but a better approach is to take the adjacent value below 6.642, in this case X1 = 3.961 (class 0), and calculate the midpoint, as this represents the dividing line between the two classes. So, the midpoint threshold is (6.642 + 3.961) / 2 = 5.30 (5.3015 before rounding)! Our root node is now complete with X1 < 5.30 and a Gini of 0.5.

Now that we have the root node and our split threshold, we can build the rest of the tree. Building a tree can be divided into two parts:

A terminal node, or leaf, is the last node on a branch of the decision tree and is used to make predictions.
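The Gini calculations and the midpoint search walked through above can be sketched in a few lines of Python. This is a minimal illustration, not the code from my GitHub: the function names `gini`, `split_gini`, `best_split`, `build_stump` and `predict` are hypothetical, and so is the data. Only the widths 1.72857131, 3.961 and 6.642 come from the text. Every other width and all of the heights are made-up values, chosen so that the classes separate cleanly on X1 as they do in the example. The sketch builds only the root split and its two leaves, and it assumes each leaf predicts its majority class. It does not show how the rest of the tree is built recursively.

```python
from collections import Counter


def gini(labels):
    """Gini index of a node: 1 - sum(p^2), where p is each class's share of the node."""
    n = len(labels)
    if n == 0:
        return 0.0  # an empty side contributes nothing to the weighted average
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def split_gini(left, right):
    """Cost of a split: the Gini of each side weighted by its number of items."""
    n = len(left) + len(right)
    return (len(left) * gini(left) + len(right) * gini(right)) / n


def best_split(rows, labels):
    """Return (feature_index, threshold, gini) for the lowest-Gini split.

    Candidate thresholds are the midpoints between adjacent distinct values
    of each feature; items with value < threshold go to the left side.
    """
    best = None
    for f in range(len(rows[0])):
        values = sorted({row[f] for row in rows})
        for lo, hi in zip(values, values[1:]):
            threshold = (lo + hi) / 2
            left = [y for row, y in zip(rows, labels) if row[f] < threshold]
            right = [y for row, y in zip(rows, labels) if row[f] >= threshold]
            cost = split_gini(left, right)
            if best is None or cost < best[2]:
                best = (f, threshold, cost)
    return best


def build_stump(rows, labels):
    """One-level tree: the root split plus two leaves that predict their majority class."""
    f, threshold, _ = best_split(rows, labels)
    left = [y for row, y in zip(rows, labels) if row[f] < threshold]
    right = [y for row, y in zip(rows, labels) if row[f] >= threshold]
    return {
        "feature": f,
        "threshold": threshold,
        "left": Counter(left).most_common(1)[0][0],
        "right": Counter(right).most_common(1)[0][0],
    }


def predict(tree, row):
    """Send the row left or right at the root and return that leaf's class."""
    return tree["left"] if row[tree["feature"]] < tree["threshold"] else tree["right"]


# Hypothetical rectangles as [X1 width, X2 height]. Only the widths 1.72857131,
# 3.961 and 6.642 appear in the text; every other number is made up.
rows = [
    [1.72857131, 2.0], [2.5, 4.0], [3.0, 1.5], [3.5, 3.0], [3.961, 2.5],  # class 0 (purple)
    [6.642, 3.5], [7.0, 1.0], [7.5, 2.2], [8.0, 4.5], [9.0, 3.2],         # class 1 (yellow)
]
labels = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1]

print(gini(labels))                          # root node Gini: 0.5
print(split_gini([0], [0] * 4 + [1] * 5))    # worked example: about 0.444
tree = build_stump(rows, labels)
print(tree)                                  # splits on feature 0 (X1) near 5.30
print(predict(tree, [2.0, 3.0]), predict(tree, [8.5, 1.0]))  # 0 1
```

The sketch evaluates only midpoints. Its first candidate on X1 falls between 1.72857131 and the next width, so it sends one item left and nine right and reproduces the 0.444 from the worked example. The winning threshold is the midpoint of 3.961 and 6.642, which matches the root node above.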